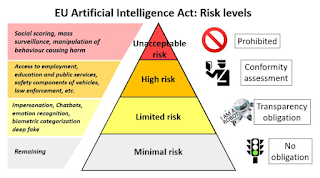

BANNED – social scoring, face recognition, dark patterns, manipulation

High risk – recruitment, employment, justice, law, immigration

Limited risk – chatbots, deep fakes, emotion recognition

Minimal risk – spam filters, computer games

But, as usual, the danger is in overreach. That can take place in several ways:

Catch-all

Like an oversized fishing net, the ban on biometric data may have the unintended consequence of banning useful applications such as deepfake detection, age recognition in child protection, detecting children in child porn, accessibility features, safety applications, healthcare applications and so on. Innovation often produces benefits that were unforeseen at the start. We have already seen a Cambrian explosion of innovation from this technology, so category bans are unwise. I fear it is too late on this one.

Core tech not applications

Rather than focus on actual applications, they have an eye on general purpose AI and foundational models. This is playing with fire. By looking for deeper universal targets they may make the same mistake as they did over consent, where millions of hours of productivity are lost as we all deal with ‘manage cookies’ pop-ups and no one ever reads consent forms. An attack on, say, foundational ‘open source’ systems would be a disaster. Yet it is hard to see how open source could be allowed. The proposed legislation has an ill-defined concept of a 'frontier model' that could wipe out innovation in one blow. No one knows what it means.

Complexity

It hauls in the Digital Services Act (DSA), the Digital Markets Act (DMA), the General Data Protection Regulation (GDPR), the new regulation on political advertising and the Platform Work Directive (PWD) – are you breathless while reading that sentence? It could become an octopus with tentacles that reach out into all sorts of areas, making it impossible to interpret and police.

Copyright

There are signs of the EU jumping the gun here, and not in a good way. There is always the danger of some publishers (we know who you are) lobbying for restrictions on data for training models. This would put the EU at a huge disadvantage. To be honest, the EU is so far behind the curve now on foundational AI that it will make little difference, but a move like this will condemn the EU to looking on at a two-horse race, with China and the US racing round the track while the EU remains locked up in its own stalls.

Concrete

One problem with EU law is its fixity. Once mixed and set, it is like concrete – hard and unchangeable. Unlike common law, such as exists in England, the US, Canada and Australia, where things are less codified and easier to adapt to new circumstances, EU Roman law is top-down and any change requires a new legislative act. If mistakes are made, you can’t fix them.

Conceit

With only 5.8% of the world’s population, the EU is under the illusion that it speaks for the whole world. Having been round the world this year, on several continents, I have news for them: it doesn’t. Certainly not for the US, and as for China, not a chance. It doesn’t even speak for Europe, as several countries are not in the EU. To be fair, one shouldn’t design laws that pander to other states, but in this case Europe is so far behind that this may be the final n-AI-l in the coffin. Some think millions, if not trillions, of dollars are at stake in lost innovation and productivity gains. I hope not.

We had a taste of all this when Italy banned ChatGPT. They relented when they saw the consequences. I hope the EU applies Occam’s razor, using the minimum number of entities needed to reach its stated goals. Unfortunately, it has form here for overregulating.
