The AI frenzy hasn’t lessened since OpenAI launched ChatGPT. The progress, widening functionality and competition have been relentless, with a cast that sounds like the characters from a new children’s puppet show - Bing, Bard, Ernie and Claude. These brought Microsoft, Google, Baidu and Anthropic into the race, which is really a two-horse race between the US and China.

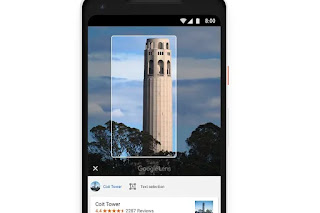

ChatGPT has accelerated the shift from search to chat. Google responded with Bard, Baidu with Ernie and its impressive benchmarks, and Anthropic’s Claude has just entered the race with a 100k-token context window and cheaper prices. They are all expanding their features, but one thing in particular caught my eye: the integration of Google Lens into Bard. Let’s focus on that for a moment.

Context matters

Large Language Models have focused on text input, as the dialogue or chat format works well with text prompting and text output. They are, after all, ‘language’ models, but one of their weaknesses is a lack of ‘context’. That is why, when prompting, it is wise to describe the context within your prompt. A model has no world model and doesn’t know anything about you or the real world in which you exist, your timelines, your actions and so on. This means it has to guess your intent just from the words you use. What it lacks is a sense of the real world, the ability to see what you see.

Seeing is believing

More than meets the eye

OK, so large language models can now see, and there’s more than meets the eye in that capability. It has huge long-term possibilities and consequences, as visual input can be used to identify your intent in more detail. The fact that you are pointing your phone at something is a strong signal of intent: the object or place is of real, personal interest. That, combined with data about where you are, where you’ve been, even where you’re going, fills out the picture of your intention.

This has huge implications for learning in biology, medicine, physics, chemistry, lab work, geography, geology, architecture, sports, the arts and any subject where visuals and real-world context matter. The model will know, to some degree, far more about your personal context and therefore your intentions. Take one example, healthcare. With Google Lens one can see how skin, nails, eyes, retinas and eventually movements could be used to help diagnose medical problems. Lens has also been used to fact-check images, to see whether they are, in fact, relevant to what is happening in the news. One can clearly see it being useful in a lab or in the field, to help with learning through experiments or inquiry. Art objects, plants and rocks can all be identified. This is an input-output problem. The better the input, the better the output.

Performance support

Just as importantly, learning in the workplace is a contextualised event. AI can provide support and learning relevant to actual workplaces, airplanes, hospital wards, retail outlets, factories, alongside machines, in vehicles and offices - the actual places where work takes place - not abstract classrooms.

In the workplace, performance support at the point of need can now see the machine, vehicle, place or object that is the subject of your need. Problems and needs are situated, so performance support, meaning support at the moment of need, as pioneered by the likes of Bob Mosher and Alfred Remmits, can be contextualised. Workplace learning has long sought to solve this problem of context, and we may well be moving towards solving it.

Moving from 2D to 3D virtual worlds

Turning to virtual worlds, my latest book, out later this year, argues that AI has accelerated the shift from 2D to 3D worlds for learning. Apple may not use the words ‘artificial’ or ‘intelligence’, but its new Vision Pro headset, which redefines computer interfaces, is packed full of the stuff, with eye, face and gesture tracking. Here the 3D world can be recognised by generative AI to give more relevant learning in context, real learning by doing. Again, context will be provided.

Conclusion

Generative AI was launched as a text service but it quickly moved into media generation. It is now opening its eyes, like Frankenstein awakening to the world. There is often confusion around whether Frankenstein was the creator or the created intelligence. With Generative AI, it is both, as we created the models but it is our culture and language that make up the LLM. We are looking at ourselves, the hive mind in the model. Interestingly, if AI is to have a world view, we may not want to feed it such a view, as we do with LLMs. We may want it to create a world view from what it experiences. We are making steps towards that exciting, and slightly terrifying, future.
