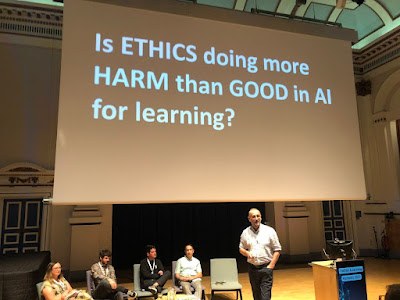

I put this to an audience of Higher Education professionals at an Online Learning Conference yesterday at Leeds University.

I have an amazing piece of technology I’ve invented. It will bring astonishing levels of autonomy, freedom and excitement to billions of people. But here’s the downside: 1.4 million people will die horrible, bloody, sometimes mangled deaths every year, with another couple of million maimed and injured. This World War level of casualties will strike every year, and is the price you have to pay. Would you say YES or NO?

Most rational souls would say NO. But let me reveal that technology – the automobile. We have come to an accommodation with the technology, as the benefits outweigh the downsides. AI may even bring in the self-driving car. My point is that we rush to judgement, as we are amateur ethicists and rely on gut feel, not reason.

This whole area, ethics, is oddly subject to a huge amount of bias as it is such an emotive subject. It plays to people's fears and prejudices, so objectivity is rare. Add new technology to the mix, along with a pile of stories in social media and you have a cocktail of wrong-headed certainty and exaggeration.

1. Deontological v Utilitarian

The offer I made at the start, I have put to many audiences. It is never taken up, as we are Utilitarians (calculating benefits against downsides) when it comes to actual decisions on using technology but dogmatic Deontologists (seeing morals as rules or moral laws) when it comes to thinking about ethics and technology.

I am a fan of David Hume’s Indirect Utilitarianism, refined by Harsanyi as preference Utilitarianism. For a good discussion of how this relates to ethical issues and AI, see Chapter 9 of Stuart Russell’s excellent book Human Compatible (2019), where he attempts to translate this into achievable, controlled but effective AI. Curiously, Hume found himself cancelled by a few morally deluded students at the University of Edinburgh recently and they removed his name from the building which housed the Department of Philosophy. This was doubling down on Religious Deontologists refusing him a Professorship in the 18th century, when he was one of the most respected intellectuals in the whole of Europe. Both groups are deluded Deontologists. He remains, in my opinion, the finest of the English-speaking philosophers. This tension has existed in ethical thinking since the Enlightenment.

In truth, most of what passes for Ethics in AI these days is lazy ‘moralizing’, moral high horses ridden by people with absolute certainty about their own values and rules, as if they were God-given. More than this, they want to impose those rules on others. They call themselves ‘ethicists’ but it is thinly disguised activism, as there is no real attempt to balance the debate with the considerable benefits. It’s an odd form of moral philosophy that only considers the downsides.

Google, Google Scholar, AI-mediated timelines on almost all social media, the filtering of harmful and pornographic material out of our email inboxes, the protection of our bank accounts – all use AI. Other huge near-term upsides in learning, healthcare and productivity are well underway.

There is a big difference between ‘ethics’ and ‘moralising’. Even a basic understanding of ethics will reveal the complexity of the subject. We have thousands of years of serious intellectual debate around deontological, rights-based, duty-based, utilitarian and other ways of thinking about ethics. A pity we give it so little thought before passing judgement.

2. Duplicity

Thomas Nagel points out, in his book 'Equality and Partiality', that we often pronounce strong deontological, moral opinions but rarely apply them in our own behaviour. We talk a lot about, say, climate change, but drive large cars and fly off regularly on vacation. We talk about the climate emergency in academia but fly off for conferences at the drop of a sunhat, don’t deliver learning online and believe in spending €28 billion flying largely rich students around Europe through Erasmus. You may want all of your AI to be fully ‘transparent’. That’s fine, but stop using Google and Google Scholar and almost every other online service, as they all use AI and it is far from transparent. My favourite example is those who are happy to 'probe' my unconscious in 'unconscious bias' training but decry the use of student data in learning on the grounds of privacy!

I’m just back from Senegal, where my fellow debating colleague Michael, from Kenya, berated the white saviours for denying Africa the opportunities that AI offers. They would deny young, aspiring workers the chance to do human reinforcement training, which pays above the average wage and gives people a step into IT employment. It’s bizarre, he says, for white saviours on 80k to see this as exploitation.

3. To focus on AI is to focus on the wrong problem

Rather than climate change, the possibility of nuclear war, a demographic time bomb or increasing inequalities – AI is getting it in the neck, yet it may just solve some of these real and present problems. In particular, it may well increase productivity, democratise education and dramatically reduce the costs of healthcare. These are upsides that should not be thwarted by idle speculation.

At its most extreme, this speculation that 'AI will lead to extinction of the human species' seems to have turned into the Doomsday tail that wags the black dog, despite the fact there is no evidence at all that this is possible or likely. Focus on what is likely, not the fear-mongering that caught your attention on Twitter.

4. New technology always induces an exaggerated bout of ethical concern

Every man, woman, their uncle, aunt and dog is an armchair ethicist, but this is hardly new. It was ever thus. Plautus made the same point about the sundial in the 3rd century BC:

The gods confound the man who first found out how to distinguish hours!

Confound him too who in this place set up a sundial to cut and hack my days so wretchedly into small portions!

Plato thought writing would harm learning and memory in the Phaedrus, the Catholic Church fought the printing press (we still idiotically teach Latin in schools), travelling in trains at speed was going to kill us, rock ’n’ roll spelled the end of civilisation, calculators would paralyse our ability to do arithmetic, Y2K was going to cause the world to implode, computer games would turn us into violent psychopaths, screen time would rot the brain, the internet, Wikipedia, smartphones, social media… now AI.

As Steven Pinker rightly spotted, a combination of negativity and confirmation bias leads to a predictable reaction to any new technology. This inexorably leads to an over-egging of ethical issues, as they confirm your initial bias.

5. Fake distractive ethics

Curiously, much of the language and many of the examples in the layperson’s mind have come from shallow and fake news, which is actually a real concern in AI, with deep fakes. Take the famous NYT article where the journalist claimed ChatGPT had told him to leave his wife. On further reading, it turns out he had prompted it towards this answer. If some stranger in a bar dropped you the line that his marriage was on the rocks, you’d put a significant bet on him being right to leave his wife. ChatGPT was actually on the money. It was a classic GIGO (Garbage In, Garbage Out), poke-the-bear story. Then there was that AI-guided missile that supposedly returned and hunted down its launcher – never happened – complete fake. The endless stream of clickbait ‘look it can’t do this’, mostly using ChatGPT 3.5 (a bit like using Wikipedia circa 2004), flooded social media. This is the worst AI will ever be but hey, let’s not consider the fact that first-release technology almost always leads to dramatic improvement. Think long-term, folks, before using short-term clickbait to make judgements.

6. Argument from authority

Then there is the argument from authority. ‘I’m a Professor’, say people in strongly worded letters to the world, ‘therefore I must be right.’ Two things matter here: domain experts often have a lousy track record, and they often lack expertise in philosophy, moral philosophy, the history of technology, politics and economics. To be fair, experts in AI are worth listening to, as they understand what is often opaque, difficult-to-understand technology. Generative AI, in particular, is difficult to comprehend in terms of what it is, how it works and why it works. It confounds even AI experts. But they are not experts on politics, ethics or regulation.

The letters that appeared in both 2015 and 2023, pushed by Tegmark’s Future of Life Institute (whose role is ethical oversight), use the argument from authority. We’re academics, we know what’s right for you, the masses. The 2023 letter demanded that we immediately stop releasing AI for six months until the regulators caught up – a ridiculous request that showed their political, economic and social naivety. I dislike this ‘letter writing’ lobbying. For a start, it had names of people who demanded they be taken off the list as they had not given permission, and some have since rescinded the statement. Authority alone is never enough.

Conclusion

This tsunami of shallow moralising is almost perfectly illustrated in Higher Education, where most of the debate around ethics has focused on plagiarism, when the actual problem is crap assessment. There is little consideration of the huge upsides and benefits for teachers and students alike. Learning, in my view, is the biggest beneficiary of this new form of AI, with health care second. Hundreds of millions are already using it to learn.

By climbing into personal pulpits, we may fail to realise the benefits in learning. Personalised learning, allowing any learner to learn anything, at any time, from any place, is becoming a reality. Functioning, endlessly patient tutors that can teach any subject at any level in any language are on the horizon: universal teachers with a degree in any subject, driven by good learning science and pedagogy. The benefits for inclusion and accessibility are enormous, as is its potential to teach in any language.

It is not that there are no ethical problems, just that objective ethical debate is harmed when it becomes enveloped in a culture of absolute values and intolerant moralising. For every ethical problem that arises, there seem to be glib answers that are simple, confidently pronounced and often wrong.
